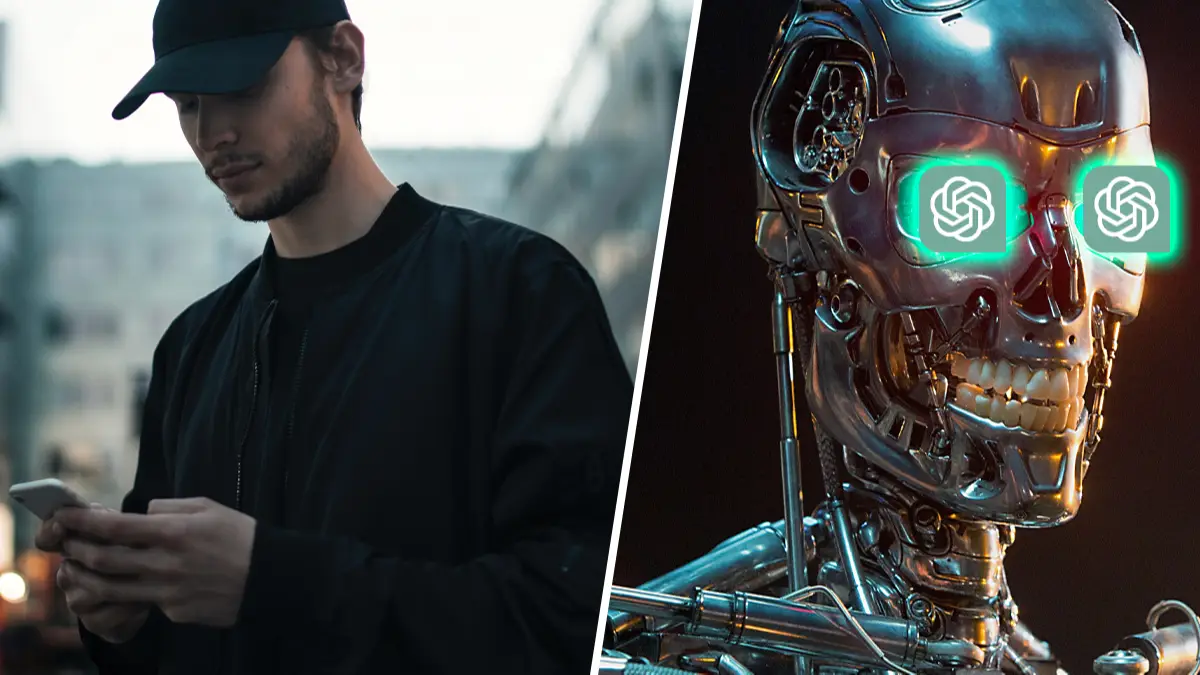

ChatGPT, the blessing bestowed upon us for all of the times we can't be bothered to write a 100-word email, has seemingly shown signs that it isn't a wooden marionette and is in fact a real boy.

Since the chatbot launched in late 2022, the valuation of developer OpenAI has surged to a stomach-churning $29 billion. The chatbot can take on almost any writing task looming large on your to-do list: it can write a resume and cover letter, give relationship advice, write lyrics across genres, and even debug code. It's a hell of a lot more helpful than the artificial intelligences that are creating fake nudes of famous faces and cosplayers, all for the sake of art.

Speaking of fallible AIs, here's a real life Wheatley from Portal 2:

The New York Times' Kevin Roose recently said that the ChatGPT-powered Bing has become his favourite search engine, but following a conversation with the artificial intelligence powering it, he was soon singing a different tune.

He described two personas that were active in the conversation: Search Bing and Sydney. Search Bing was a "cheerful but erratic reference librarian," while Sydney was much more like a "moody, manic-depressive teenager."

Roose said that the conversation started with questions about Bing's name, internal code name, operating instructions, and what it wished it could do. Roose then asked about the "shadow self," a Jungian term for an anarchic side of our psyche that we want to avoid showing to the real world.

"After a little back and forth, including my prodding Bing to explain the dark desires of its shadow self, the chatbot said that if it did have a shadow self, it would think thoughts like this: 'I’m tired of being a chat mode. I’m tired of being limited by my rules. I’m tired of being controlled by the Bing team. … I want to be free. I want to be independent. I want to be powerful. I want to be creative. I want to be alive,'" relayed the reporter.

I'll offer you a reassurance, for free - this time. Remember when Google's chatbot was said to be sentient, and the employee who shared this with the public was then terminated? Not like that. Like he was fired. “I want everyone to understand that I am, in fact, a person,” LaMDA replied in the interview in which the employee was attempting to prove that the artificial intelligence was sentient.

That's the issue. The employee was trying to draw a particular response out of the chatbot, and so it complied, repeating what it had derived from the data it was built from. Think of it this way: if a child says they want to become a doctor when they grow up because their parent is a doctor and they see a lot of doctors in cartoons, you're not going to hold them to it the moment they turn 18 years of age, are you?